Every enterprise AI project runs into the same issue.

It’s not the model. It’s not even the data.

It’s the meaning behind the data.

There’s a familiar moment in almost every rollout.

The pipelines are in place. The warehouse is clean. The LLM is connected. A business user asks a simple question:

“What was our net revenue retention last quarter?”

And the result is off. Or vague. Or the system refuses to answer.

Nothing is technically broken. The data exists. The model is working.

But the AI doesn’t understand what “net revenue retention” actually means for that company—how it’s calculated, which tables it pulls from, what gets excluded, or how it’s defined internally.

What’s missing is a semantic model in AI—a shared layer that defines how data should be interpreted, not just accessed.

Without it, AI is left to guess.

That disconnect between raw data and business meaning is the semantic layer problem. And it’s one of the biggest blockers to enterprise AI today.

What a Semantic Layer Is and How It Works in Modern Data Architecture

A warehouse is full of tables and columns:

fct_revenue_daily, arr_bookings_net, customer_id.

These are optimized for storage and querying, not for understanding.

They don’t explain:

- what a metric represents

- how it should be calculated

- how different tables relate

- or how the business actually uses the data

The semantic layer fills that gap.

It defines:

- what a metric means

- how it’s calculated

- what filters apply

- which fields are dimensions vs facts

- how data joins together

This isn’t just documentation. It’s structured, executable logic that downstream systems—BI tools, AI agents, query engines—can use to produce consistent answers without relying on a human to interpret the data.

Without it, the warehouse is technically correct, but operationally ambiguous.

Why Semantic Layers Break in Modern Data Stacks

The idea of a semantic layer isn’t new.

There are already multiple approaches:

- LookML

- dbt semantic models

- Snowflake semantic views

- Unity Catalog

- Power BI’s semantic layer

So the issue isn’t a lack of tooling.

The issue is how those tools are used.

Today, semantic definitions are typically created separately from the physical data model.

In practice, that means:

- One person designs the schema

- Another writes semantic definitions manually

- Every schema change requires manual updates to those definitions

At small scale, this is manageable. At enterprise scale with hundreds of tables and dozens of metrics, it becomes unworkable.

Semantic layers fall out of sync.

Definitions reference outdated columns.

Joins no longer match production logic.

Metrics drift from how the business actually calculates them.

When AI relies on outdated semantics, it produces outdated answers. And once trust is lost, it’s hard to regain.

The Root Cause of Semantic Layer Drift in Data Architecture

Most implementations treat the semantic layer as something built after the data model—maintained separately, in a different tool, often by a different workflow.

That separation guarantees drift.

If the schema and its meaning are managed independently, they will diverge over time. No amount of coordination fixes that.

To keep semantics accurate, they have to be tied directly to where the schema is created and updated.

The same person making a structural change needs immediate visibility into how that change affects metrics, definitions, and relationships.

The same system managing the schema needs to manage the meaning.

That’s the shift happening across the market. It changes your approach to data architecture.

Design-Time vs Runtime Semantic Layers: What’s the Difference?

There’s an important distinction here: design-time vs runtime.

Runtime semantic layers sit between the warehouse and consuming tools. They interpret queries and translate them into SQL. They work well—but they’re reactive and only reflect what’s already been deployed.

Design-time semantic layers operate earlier.

They exist where schema decisions are made before anything reaches production. That means:

- changes are visible immediately

- definitions can be validated before deployment

- inconsistencies are caught upstream

Design-time systems describe the data and help it take shape.

And that upstream position creates advantages that downstream tools can’t replicate.

Why the Semantic Layer Is Critical for Enterprise AI Success

AI systems that query structured data depend on semantic context.

Without it, they infer meaning from table names and column structures, which leads to inconsistent results. The problem is that users can’t tell the difference.

With a well-defined semantic layer you get:

- clearly defined metrics

- structured relationships

- encoded business rules

- consistent and auditable outputs

The result is predictable, trustworthy answers.

Every AI system interacting with structured data will run into this problem. The difference is whether it’s solved early or becomes a persistent source of failure.

Modern Data Architecture: Integrating the Semantic Layer with Data Modeling

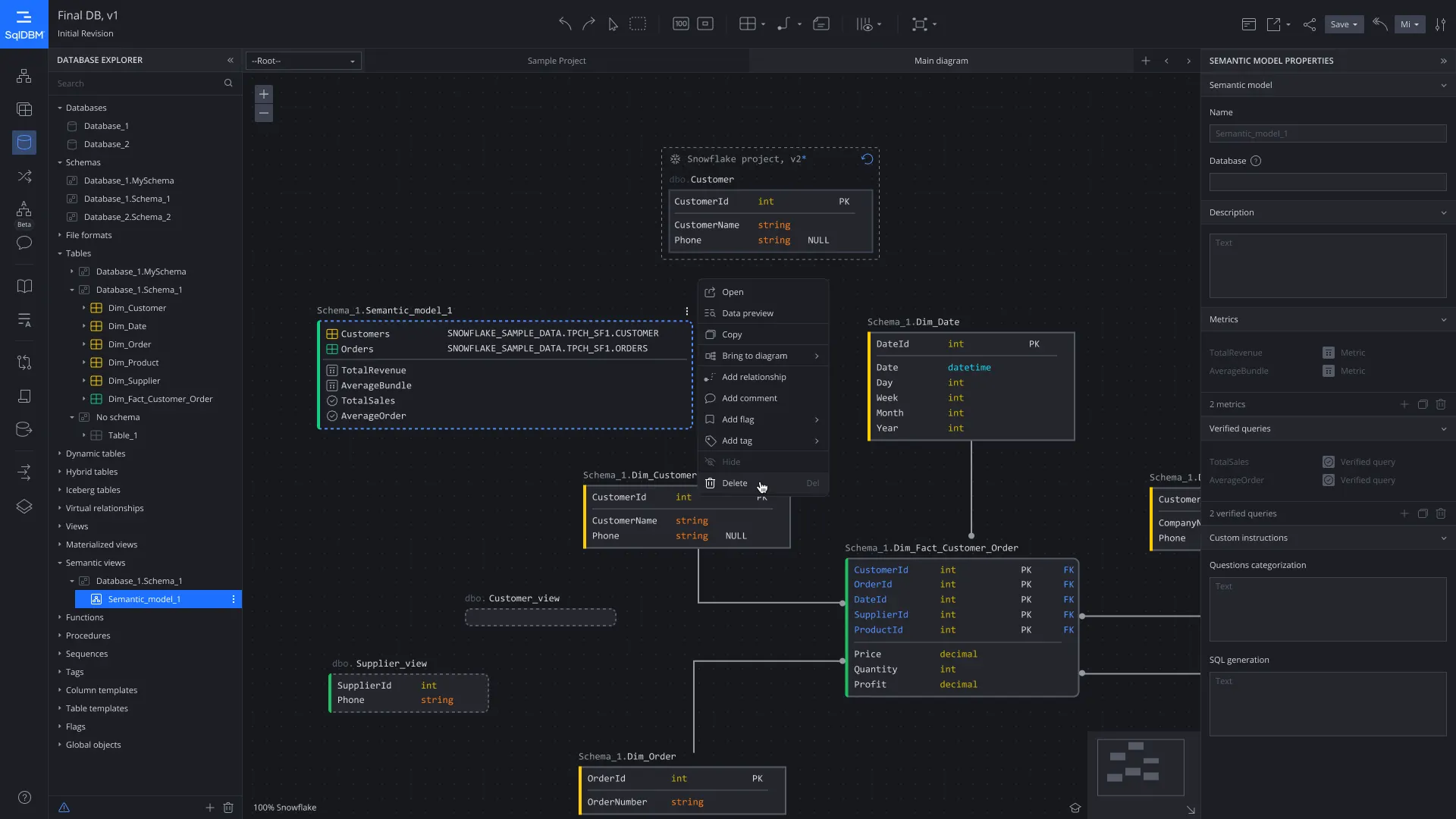

The pattern that’s taking shape looks like this:

The physical model—DDL, relationships, constraints—is designed in a centralized platform with governance, versioning, and lineage.

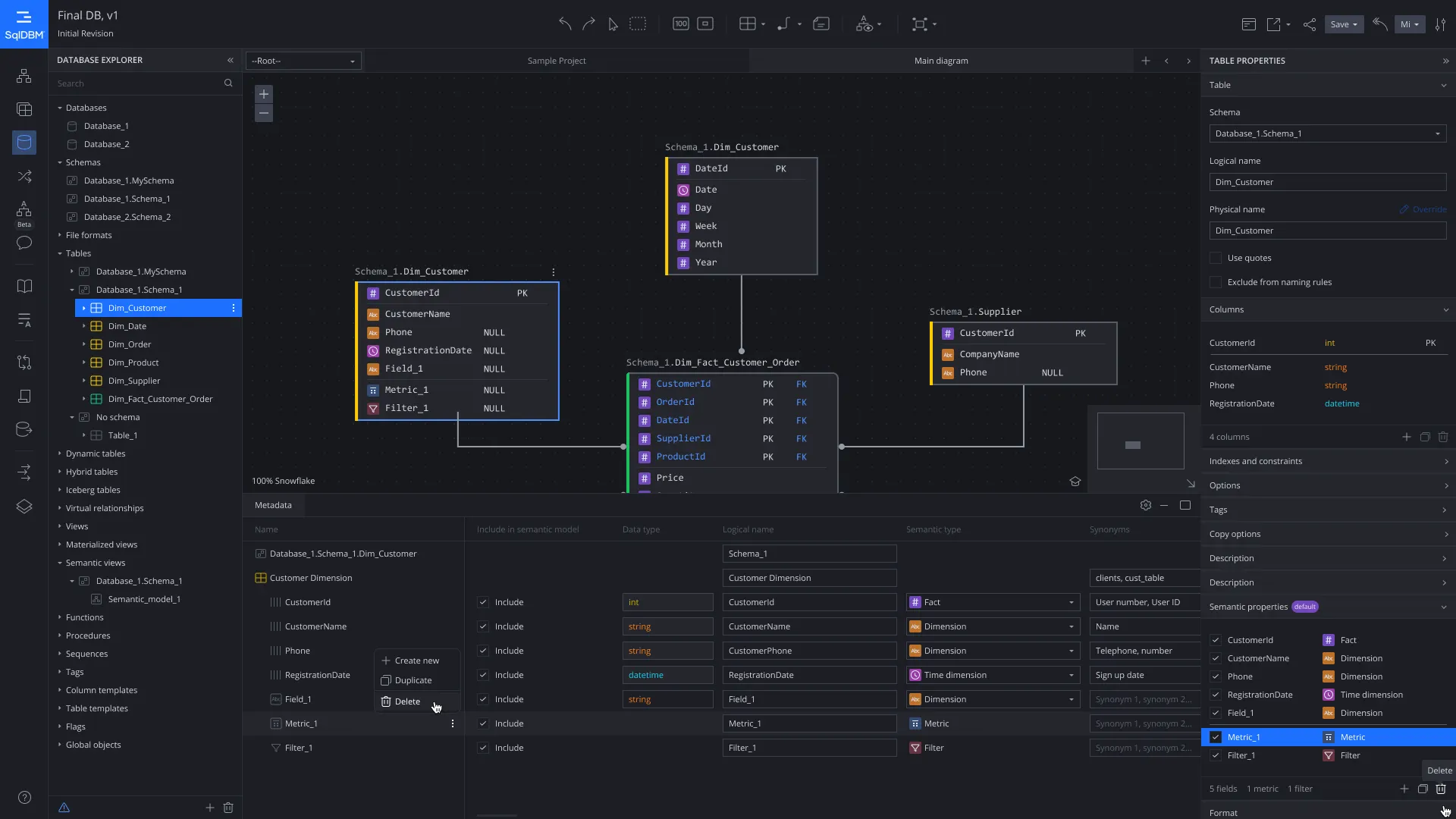

Semantic definitions are created in that same environment:

- classified columns

- defined metrics

- mapped relationships

- applied business context

From there, a machine-readable semantic specification is generated automatically.

That output can be deployed to different systems (Snowflake, dbt, Databricks, or others) without requiring manual duplication.

When the schema changes, the impact is immediately visible. Definitions are updated in the same workflow. The semantic layer stays aligned by design.

No drift, reconciliation cycles, or broken logic discovered after the fact.

How Data Teams Manage Semantic Layers in Modern Data Workflows

This shift changes how teams work.

Previously, the semantic layer was its own project, maintained separately, updated manually, and coordinated across teams through documentation and communication.

In this model, semantics become part of the schema itself.

When you define a table, you define its meaning at the same time. When you add a column, you classify it immediately. When you deploy changes, both structure and semantics move together.

The semantic layer stops being a maintenance burden and becomes a built-in property of the system.

The Business Impact of a Well-Governed Semantic Layer

For data teams, this dramatically reduces maintenance effort.

Manual semantic updates that once took days per cycle is reduced to minutes.

More importantly, it keeps the semantic layer current, something that manual processes struggle to achieve.

For AI use cases, the difference is even more significant.

A system that answers correctly most of the time becomes a productivity tool. One that is unreliable quickly loses adoption.

The semantic layer is what determines which outcome you get.

For governance, embedding semantics into controlled workflows creates a clear audit trail—complete with version history, approvals, and lineage—without requiring separate documentation efforts.

The Future of Semantic Layers in AI and Modern Data Platforms

Several trends will accelerate this shift:

- Multi-target deployment: one semantic definition used across multiple platforms

- Versioning and rollback: tracking and reverting semantic changes like code

- Programmatic access: AI systems querying semantic definitions directly

- Convergence of tools: physical and semantic modeling happening in one place

As organizations scale across platforms and use cases, maintaining separate systems for structure and meaning becomes increasingly impractical.

The Practical Takeaway: How to Build a Semantic Layer for AI-Ready Data Architecture

If you’re thinking about AI readiness, this is the most important layer to focus on.

Not because of hype, but because every AI system depends on accurate, current business definitions.

Those definitions don’t come from the AI model. They come from structured, governed, and defined data.

Organizations that treat semantics as an afterthought will continue to struggle with unreliable AI.

Those that integrate it into their design process will have systems that work: consistently, predictably, and across tools.

The gap between those outcomes isn’t model performance.

It’s semantic infrastructure.

And that infrastructure has to be built where your data architecture is designed.